- Jun 25 Tue 2024 10:27

C++ Standard Library pair

- Jun 22 Sat 2024 18:57

Unpacking input arguments in Python

- Jun 10 Mon 2024 09:04

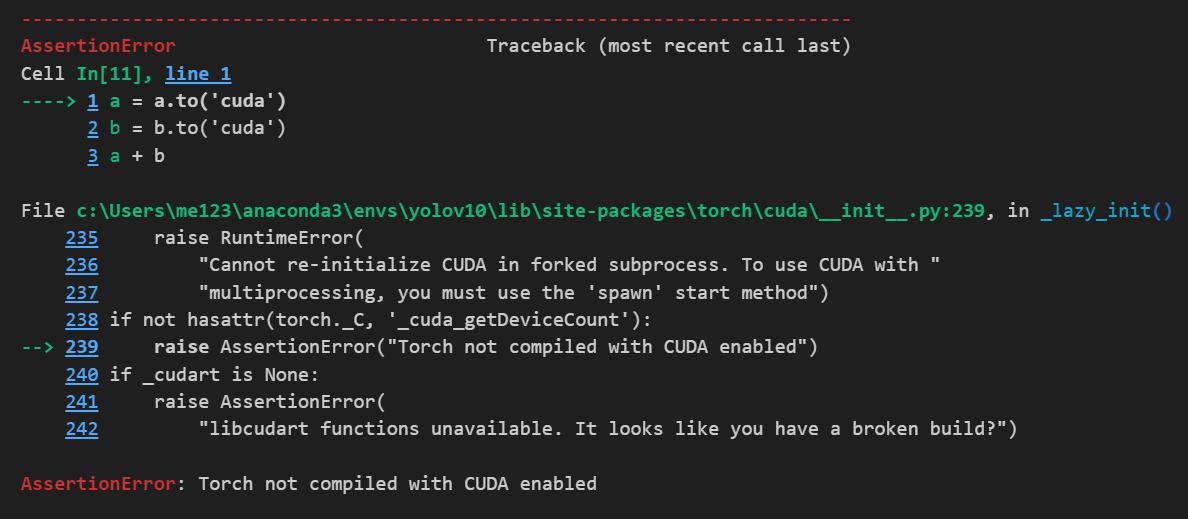

Install PyTorch with CUDA

- Jun 04 Tue 2024 09:53

ROS2 Installation

- Jun 03 Mon 2024 08:47

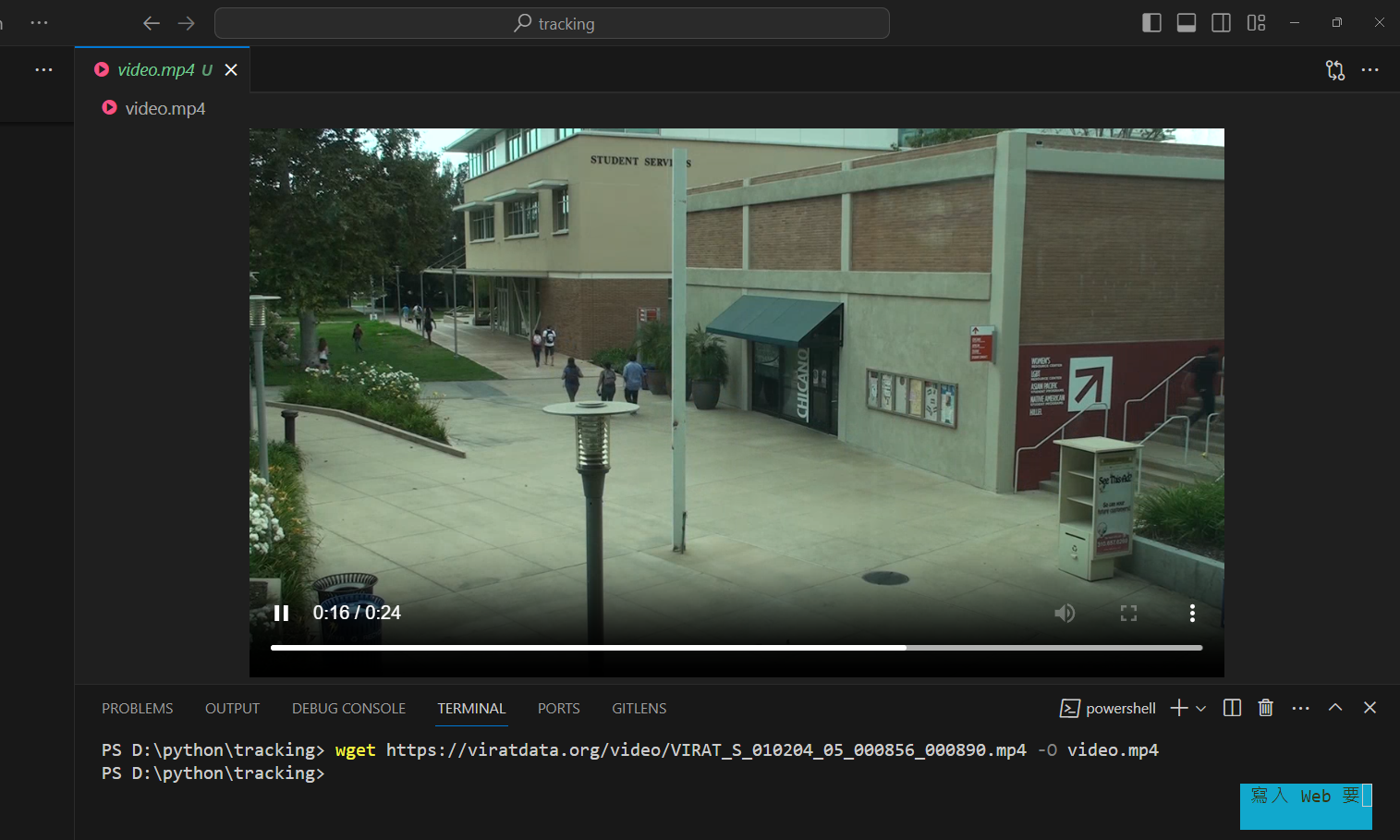

Installing YOLOv10 in a conda environment

- May 30 Thu 2024 09:34

Book_李宏毅 (Hung-yi Lee)_Introduction to Generative AI 2024

- May 14 Tue 2024 10:07

Common Hidden Layers in Deep Learning Neural Networks

This type of layer normalizes each channel across a mini-batch.

This can be useful in reducing sensitivity to variations within the data.
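To make the normalization concrete, here is a minimal plain-Python sketch of the arithmetic for a single channel. It is not the toolbox implementation: the function name `batch_normalize_channel` and the `epsilon` value are illustrative assumptions, and the learnable scale and offset that a batch normalization layer usually applies afterwards are left out.

```python
import math


def batch_normalize_channel(values, epsilon=1e-5):
    """Normalize one channel's activations across a mini-batch.

    `values` holds the activations of a single channel, one per sample
    in the mini-batch. `epsilon` (assumed value) avoids division by zero.
    """
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n
    return [(v - mean) / math.sqrt(variance + epsilon) for v in values]


# Hypothetical mini-batch of four samples for one channel.
channel = [2.0, 4.0, 6.0, 8.0]
normalized = batch_normalize_channel(channel)
print(normalized)
```

After normalization the channel has a mean of roughly zero and a variance of roughly one, which is what reduces sensitivity to shifts in the input scale.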

(4) layer = reluLayer(Name="relu_1")

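creates a rectified linear unit (ReLU) layer named "relu_1". A ReLU layer sets any input value less than zero to zero and passes other values through unchanged.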
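As a rough illustration of what a ReLU layer computes, the following plain-Python sketch applies the threshold element-wise. The helper name `relu` is an assumption for this example and is unrelated to the toolbox API.

```python
def relu(values):
    """Element-wise ReLU: negative values become zero, others pass through."""
    return [max(0.0, v) for v in values]


activations = relu([-2.0, 0.0, 3.5])
print(activations)  # [0.0, 0.0, 3.5]
```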

(5) layer = additionLayer(numInputs)

creates an addition layer with the number of inputs specified by numInputs. This layer takes multiple inputs and adds them element-wise.
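Element-wise addition of several inputs can be sketched in plain Python as follows. The function name `add_inputs` is hypothetical, and the sketch assumes every input has the same length, mirroring the requirement that the inputs to an addition layer have matching sizes.

```python
def add_inputs(*inputs):
    """Add any number of equally sized inputs element-wise."""
    if len({len(x) for x in inputs}) != 1:
        raise ValueError("all inputs must have the same length")
    return [sum(elements) for elements in zip(*inputs)]


# Two inputs, as in a typical skip connection.
summed = add_inputs([1, 2, 3], [10, 20, 30])
print(summed)  # [11, 22, 33]
```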

(6) layer = fullyConnectedLayer(outputSize)

creates a fully connected layer.

outputSize specifies the size of the output for the layer.

A fully connected layer multiplies the input by a weight matrix and then adds a bias vector.
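The matrix multiplication and bias addition can be written out in a few lines of plain Python. This is only a sketch: `fully_connected`, `weights`, and `bias` are illustrative names, and the numbers are made up. The number of rows in `weights` plays the role of outputSize.

```python
def fully_connected(x, weights, bias):
    """Compute y = W * x + b, where W has one row per output element."""
    return [
        sum(w * xi for w, xi in zip(row, x)) + b
        for row, b in zip(weights, bias)
    ]


# Hypothetical layer with 3 inputs and an output size of 2.
weights = [[1.0, 0.0, -1.0],
           [0.5, 0.5, 0.5]]
bias = [1.0, -1.0]
output = fully_connected([2.0, 4.0, 6.0], weights, bias)
print(output)  # [-3.0, 5.0]
```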

(7) layer = softmaxLayer()

creates a softmax layer.

This layer is useful for classification problems because it converts raw scores into probabilities that sum to 1.
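For intuition, here is a small plain-Python sketch of the softmax function itself. The name `softmax` and the example scores are assumptions for illustration; subtracting the maximum score before exponentiating is a common trick for numerical stability and does not change the result.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    largest = max(scores)  # shift for numerical stability
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores for three classes.
probabilities = softmax([1.0, 2.0, 3.0])
print(probabilities)
```

The class with the largest raw score receives the largest probability, so the predicted class is simply the index of the maximum output.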

- May 10 Fri 2024 08:46

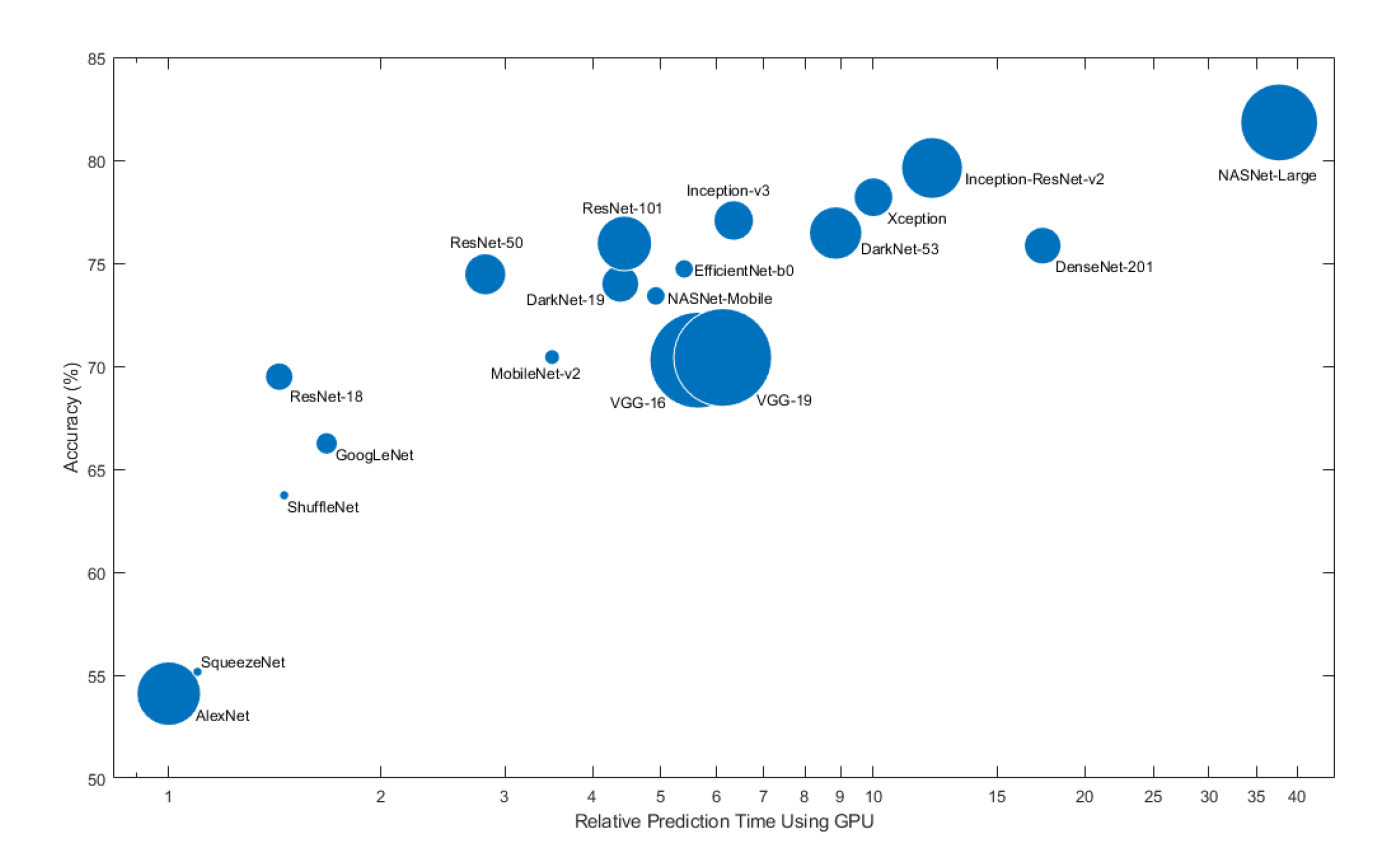

Accuracy and Relative Estimated GPU Time

- Apr 16 Tue 2024 08:52

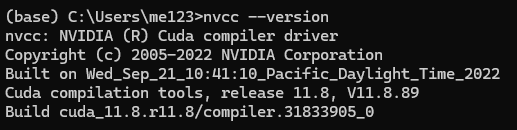

Checking CUDA and cuDNN versions

- Mar 25 Mon 2024 10:53

How to make a program always run as administrator?